When a tiny piece of space junk cut a 5mm hole in the International Space Station’s (ISS) robotic arm last year, it came as little surprise. The near-Earth space environment has become so clogged with defunct orbiters and scraps of wreckage orbiting at up to eight times the speed of a bullet that NASA estimates half a million dangerous pieces of debris the size of a marble or larger (1cm plus) pose a constant threat to working satellites, whose number is growing rapidly as well.

The US Department of Defense’s global Space Surveillance Network tracks around 27,000 pieces of space junk, primarily through standard ground-based optical observations and radar systems. But that is all – we are completely blind to the vast majority.

Moreover, Gregory Cohen from Western Sydney University (WSU) explained that the accuracy with which we can track objects in space is alarmingly poor: ‘Close conjunctions between satellites have error margins in the tens of kilometres,’ he said. ‘We don’t really know just how close some of the close-calls have been, and, more worryingly, we don’t know how many close-calls we have missed altogether.’

Cutting-edge photonics technologies have the potential to change this situation and bring the near-Earth space environment into focus, making it possible to accurately track and trace space debris, and even potentially course-correct dangerous objects on a collision course with satellites.

Inspired by the eye

Cohen and his team are developing a novel event-based camera system named Astrosite. From the outside, Astrosite is underwhelming, consisting of three relatively small 10- to 16-inch telescopes protruding from a standard 20-foot shipping container. It is how the latest modified event-based cameras from both Inivation (DVXplore) and Prophesee (generation four Metavision) attached to the telescopes ‘see’ the sky that makes Astrosite ground-breaking.

Neuromorphic event-based cameras overcome limitations in terms of exposure times and saturation by taking inspiration from how the human eye and brain function. ‘What makes these cameras different is that they don’t integrate, and each pixel in the sensor operates independently and asynchronously from one another,’ explained Cohen. This gives the sensors unprecedented resolution in time and dynamic range of intensity. Unlike conventional CCD or CMOS cameras, which work by taking long integrations to detect objects, each pixel of an event-based camera detects only the change in light intensity falling on it. This allows the Astrosite system to collect information only when something changes in its field of view, such as when a satellite or other object soars past.
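The per-pixel behaviour Cohen describes can be sketched in a few lines of Python. This is only an illustration of the principle, not the Astrosite pipeline or any camera vendor's API: the helper name `events_from_frames`, the contrast threshold of 0.2 and the synthetic frames are all assumptions, and a real event sensor does this asynchronously in hardware, without frames.

```python
import numpy as np

def events_from_frames(frames, threshold=0.2):
    """Emit (t, row, col, polarity) events wherever a pixel's log intensity
    has changed by more than `threshold` since that pixel last fired.

    Hypothetical helper for illustration only.
    """
    frames = np.asarray(frames, dtype=float)
    log_ref = np.log(frames[0] + 1e-6)       # per-pixel reference level
    events = []
    for t in range(1, len(frames)):
        log_now = np.log(frames[t] + 1e-6)
        diff = log_now - log_ref
        fired = np.abs(diff) > threshold
        for r, c in zip(*np.nonzero(fired)):
            events.append((t, int(r), int(c), 1 if diff[r, c] > 0 else -1))
        # Only pixels that fired update their reference; the rest stay silent.
        log_ref[fired] = log_now[fired]
    return events

# Synthetic scene (assumed values): a dim sky, one bright static "star",
# and one object moving a pixel per time step along row 2.
frames = np.full((4, 5, 5), 10.0)
frames[:, 0, 0] = 200.0                      # the star never changes
for t in range(4):
    frames[t, 2, t] = 100.0                  # the moving object

events = events_from_frames(frames)
for event in events:
    print(event)
```

In this toy scene the bright but unchanging star produces no events at all, while the moving object produces a brightening and a dimming event at each step, which is the sense in which only the relevant data is recorded.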

In regular imaging, either the object being tracked appears as a streak and the background stars are points, or the stars are streaks and the object is a point. Using Astrosite, Cohen’s team can track clearly discernible objects progressing across the camera’s field of view without the background stars becoming blurred.

‘This is a very different way of imaging the sky,’ he said. ‘We only get events from the objects of interest in the scene, meaning that not only do we get less data, but we also inherently get only the relevant data and we get it extremely quickly.’ What’s more, event-based cameras are far less affected by saturation than conventional cameras, allowing Cohen and his team to track objects during the day.

A final benefit came by accident. ‘When we were having trouble seeing things with the event-based camera, we’d often tap on the telescope to induce changes,’ recalled Cohen. ‘This horrified some of our astronomer colleagues, but it produced good results and the upshot is that we now actively embrace motion.’ This offers flexibility, meaning Astrosite does not require a stable platform and can continue imaging when on the move. ‘It allows us to go much faster and to do really interesting and novel imaging techniques,’ added Cohen.

So far, results from Astrosite have been encouraging. The team has managed to track various objects up to geostationary orbit, including a 3U CubeSat (roughly 10 x 10 x 30cm in size), and is actively looking at the best ways to contribute Astrosite’s data to the space tracking community.

The researchers have also started to develop the technology further, including incorporating Astrosite at larger telescopes and exploring a wide range of applications for event-based sensors in orbit – last year, WSU researchers customised and integrated Inivation’s Davis240C neuromorphic camera into the M2 CubeSat now orbiting Earth.

Guiding the way

Another promising method for accurately tracking space debris combines conventional large telescopes with laser guide star technology and adaptive optics (AO). A laser guide star system shoots a laser into the mesosphere. At 90-110km above the Earth’s surface, the light reaches a layer containing about 600kg worldwide of sodium, left over from and constantly replenished by micro-meteoroids and asteroids burning up in the atmosphere. If the laser is tuned to the sodium wavelength (589nm), it excites the sodium atoms and causes them to fluoresce, effectively forming an artificial star, or guide star.

Observing the guide star back at the observatory allows the aberration caused by the atmosphere to be measured. These measurements can then be fed into the AO system, which consists of a flexible mirror warped by a slew of electrically driven actuators on the mirror’s underside, each applying a small deformation to the mirror surface on timescales of milliseconds. Observing an object of interest with a mirror that pre-compensates for atmospheric turbulence brings it into sharp focus.

Military research laboratories and large astronomical observatories have been developing and employing such AO systems for decades. For example, Toptica Project’s SodiumStar is installed at the European Southern Observatory’s Very Large Telescope and Extremely Large Telescope, the Keck Observatory, Gemini South and North, and Subaru, among others, to remove the blurring effect of the atmosphere when viewing the cosmos.

But tracking space debris presents a unique challenge. ‘The apparent motion of stars is dictated by the rotation speed of the Earth, so pretty slow across the sky above the telescope,’ explained Celine D’Orgeville of the Australian National University (ANU). ‘Whereas a satellite or debris will cross the sky in a few minutes.’

Not only does this mean the components of the AO system need to operate faster, but the laser guide star needs to point ahead of the satellite a few arcseconds on the sky. ‘We measure what the atmosphere is doing ahead of the satellite, because by the time you’re going to apply the correction, the satellite will have moved,’ D’Orgeville said.

Western Sydney University’s Astrosite mobile telescope is built in a shipping container. Credit: Western Sydney University

D’Orgeville leads ANU’s Laser Guide Star adaptive optics activities undertaken at Mount Stromlo near Canberra. The Mount Stromlo Satellite Laser Ranging (SLR) facility, operated by Electro Optic Systems (EOS), is part of a worldwide network of approximately 42 SLR stations that provide millimetre-level accurate tracking for precision orbit determination and geodesy.

Some years ago, EOS expanded the SLR capability to use kilowatt-class pulsed lasers to track space debris to metre accuracy in three dimensions, providing the most accurate orbit measurement technique available. Measuring the time for the reflected light to return to the receiver provides range. ‘As validated by the US Air Force, we can track pieces of space debris down to about 3mm at 400km,’ explained EOS chief technology officer, Craig Smith. ‘As long as you can find them first.’
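The ranging principle is simple enough to show as arithmetic. The sketch below assumes propagation at the vacuum speed of light and ignores atmospheric delay and instrument offsets, which a real SLR station must correct for; the function names are illustrative, not part of any EOS system.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s

def range_from_round_trip(seconds):
    """One-way distance for a measured two-way light travel time."""
    return C * seconds / 2.0

def round_trip_for_range(metres):
    """Two-way light travel time for a target at the given distance."""
    return 2.0 * metres / C

t_400km = round_trip_for_range(400e3)       # echo delay for a target at 400km
timing_for_1m = round_trip_for_range(1.0)   # timing change per metre of range

print(f"Round trip to 400km: {t_400km * 1e3:.3f} ms")
print(f"Timing per metre of range: {timing_for_1m * 1e9:.2f} ns")
```

Under these assumptions, an echo from 400km returns after about 2.7 milliseconds, and resolving range to a metre means timing that echo to within roughly 7 nanoseconds.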

Accurate tracking is important to allow satellites and spacecraft such as the ISS to sidestep potentially dangerous junk. But defunct satellites and other large scrap in orbit cannot manoeuvre. ‘When a small piece of space debris hits a big piece of space debris, you end up with another 5,000 or so bits of space debris,’ said Smith. The worry for many is that at some point, a cascade effect known as Kessler Syndrome will ensue, causing so much distributed debris that it affects all space activities for generations to come.

To avoid this depressing fate and reduce the chances of collisions, EOS and ANU under the auspices of the Space Environment Research Centre (SERC) – a collaboration between the Australian Government, industry and academia – have been developing laser technologies to not just track junk but manoeuvre it with photon pressure.

The main difference between tracking and pushing debris lies in the concentration of laser power (power density) onto the target. In the photon pressure system, the pulsed 1kW tracking laser is replaced by a 20kW continuous wave laser running at 1,064nm, focused through the atmosphere onto the target by an AO system.

The AO system includes a sodium guide star laser created by combining two fibre lasers – one at 1,064nm and one at 1,319nm – through a sum frequency crystal to attain 589nm. Light from the guide star is collected by a wavefront sensor to measure atmospheric aberration, and the measurement is fed to a 177-actuator deformable mirror that imprints an equal but opposite aberration on the laser beam. A 1.8m telescope beam director is then used to deliver the pre-aberrated laser beam to the target.
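The 589nm figure follows from energy conservation in sum frequency generation: the photon energies add, so the inverse wavelengths add. A quick check, using only the two wavelengths quoted above (the function name is illustrative):

```python
def sum_frequency_wavelength_nm(lambda1_nm, lambda2_nm):
    """Output wavelength when two photons combine: 1/l3 = 1/l1 + 1/l2."""
    return 1.0 / (1.0 / lambda1_nm + 1.0 / lambda2_nm)

guide_star_nm = sum_frequency_wavelength_nm(1064.0, 1319.0)
print(f"Sum frequency output: {guide_star_nm:.1f} nm")
```

The result lands within a tenth of a nanometre of the 589nm sodium line, which is why this particular pair of fibre lasers is used.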

This focused light imparts energy and momentum on the space debris, changing its orbit by a small amount. ‘We can change the velocity of the object by, typically, a millimetre per second – which isn’t much, given that the things are travelling at 30,000 km/h,’ explained Smith. ‘But that displacement accumulates over 24 hours, and you end up with hundreds of metres of separation along the orbit.’
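Smith's numbers can be sanity-checked with two textbook relations. Both are simplifying assumptions rather than EOS's own model: radiation pressure exerts a force of P/c on an absorbing target (up to 2P/c on a mirror-like one), and for a small along-track velocity change on a near-circular orbit, linearised relative-motion theory gives a separation that grows as roughly three times the velocity change multiplied by the elapsed time. Treating the full 20kW as landing on the target is an optimistic upper bound.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s

def photon_force_newtons(power_on_target_w, reflectivity=0.0):
    """Radiation-pressure force: P/c if absorbed, up to 2P/c if mirror-like."""
    return (1.0 + reflectivity) * power_on_target_w / C

def along_track_drift_m(delta_v_m_s, elapsed_s):
    """Approximate along-track separation after a small along-track
    velocity change on a near-circular orbit (assumed: about 3 * dv * t)."""
    return 3.0 * delta_v_m_s * elapsed_s

force = photon_force_newtons(20e3)             # assumes all 20kW is absorbed
drift = along_track_drift_m(1e-3, 24 * 3600)   # 1 mm/s, one day later

print(f"Force from 20kW: {force * 1e6:.1f} micronewtons")
print(f"Drift after 24 hours: {drift:.0f} m")
```

Even under the optimistic assumption, the push is only tens of micronewtons, yet a 1 mm/s change grows into a separation of a few hundred metres within a day, consistent with the figure Smith quotes.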

The inside of Astrosite, a self-contained facility for tracking space objects using neuromorphic imaging. Credit: Western Sydney University

Unfortunately, SERC funding for the project ended last year, before any debris could be pushed in a real demonstration. But progress continues at EOS. ‘We’ve got most of the parts operating and we are trying to get the actual proof of principle demonstration completed,’ said Smith. ‘There’s still a lot of work in getting optical coatings on the delicate deformable mirrors that can withstand the laser power.’

Space-based solution

Though more expensive, a simpler approach to moving dangerous space junk could come from adding laser-based debris deflection spacecraft to humanity’s growing orbital armada. Such an idea is not new. A powerful space-based laser to ablate pieces of debris and thereby de-orbit them was first proposed in 1991. However, this technique is fraught with difficulty and risk. Not only are the required high energy (>10kJ) pulsed lasers for laser-ablative debris nudging currently unavailable, but the technique has the potential to create additional fragments during the procedure.

The European Space Agency and collaborators have explored a gentler method in recent conceptual and feasibility studies, proposing sending spacecraft into orbit that take advantage of the same photon pressure effect EOS is attempting to exploit at Mount Stromlo to slightly divert small pieces of dangerous space junk.

The system the researchers envisioned in one study, named Orbit Laser Momentum Transfer (OLaMoT), consists of a moderately powerful continuous wave laser (4kW output power, 1,024nm) generating a well-collimated beam to exert photon pressure, a pulsed laser (1,310nm) for ranging, and a very high-resolution telescope to locate the particles and monitor the effects of illuminating debris.

‘The technical challenges are something that in a few years can be solved,’ said OLaMoT research manager Jouni Peltoniemi, of the Finnish Geospatial Research Institute. ‘What is probably the bigger challenge is that we need a good map of particles – most of the particles are unknown, we don’t know where they are.’

He continued: ‘Organisations are spending hundreds of millions of Euros to make good observation systems with radar, optical telescopes, laser telescopes on Earth, and planning new kinds of space-based systems. I hope there will be cooperation, because this is a global problem causing serious risk to everything that is in space.’

Quantum cascade lasers play their part looking for ‘NICE LIFE’ in a galaxy far, far away…

Looking up at a night sky filled with stars has had humanity dreaming, generation after generation, about our place in the vast universe. Whether life exists beyond Earth is a long-standing scientific question on which two scientists in Switzerland are actively working. Dr Adrian Glauser and Dr Mohanakrishna Ranganathan, of ETH Zurich, study exoplanets, possible places where life could exist.

When scientists look at exoplanets using telescopes, they get precise information – in the form of infrared (IR) light – about their composition and the possibility of life on these planets. However, a challenge is to sort out the IR light gathered by telescopes and remove the misleading IR radiation emitted by the stars around the exoplanet. In other words, scientists need to focus only on the small portion of the light that comes from the exoplanet. By shutting out the light coming from the stars, the ETH Zurich experiment aims to help tackle this problem. Dr Glauser said: ‘We aim to extinguish the star’s light, to see the exoplanet.’

Because light is the primary source of usable information, Glauser and Ranganathan are developing an optical system that will block as much of the interfering starlight as possible.

Cold collection

They do this using a technique known as interferometry, which blocks or nulls the light coming from the stars. Furthermore, to eliminate interfering light, particularly IR light, the project will eventually gather light from deep space in a very cold (cryogenic) environment, where the device itself will not emit interfering IR light.

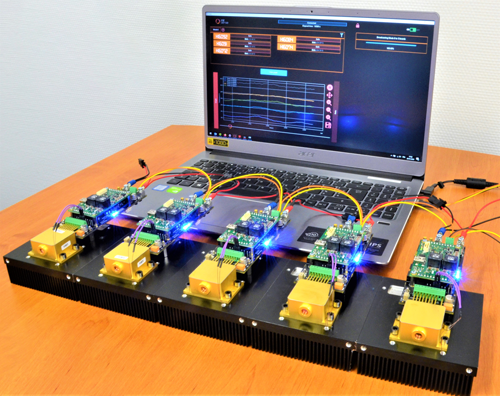

A mirSense quantum cascade laser was chosen for the experiment because it enabled the scientists to recreate starlight and produce IR radiation in the 4µm to 8µm range. A 4µm source was a good place to start at room temperature. A blackbody to mimic stars’ IR radiation at cryogenic temperatures is likely to be used later.

In the lab, the QCL beam was divided into two spatially coherent beams to replicate the beams acquired by two telescopes. Combining two telescopes in this way is intended to get as near as possible to the spatial resolution of a single, really large telescope (about 100m in diameter).

Using this optical setup, the optical power output of the QCL can be decreased by six orders of magnitude, from 180mW to 180nW. A camera picks up the signal, demonstrating that the interferometer works and can block IR radiation using the double slit experiment method.
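To give a feel for what a factor of a million demands, the sketch below uses the ideal two-beam interference formula. It rests on several assumptions the text does not confirm: that the reduction is achieved purely by destructive interference, that the two beams have perfectly matched amplitudes, and that the source is monochromatic at 4µm. The function names are illustrative.

```python
import math

def transmitted_fraction(phase_rad):
    """Fraction of peak intensity when two equal, coherent beams are
    combined with the given relative phase (ideal two-beam interference)."""
    return (1.0 + math.cos(phase_rad)) / 2.0

def phase_error_for_null(depth):
    """Phase offset from pi (perfect cancellation) that leaks `depth`
    of the peak intensity, assuming equal beam amplitudes."""
    return math.acos(1.0 - 2.0 * depth)

null_depth = 180e-9 / 180e-3                      # 180nW out of 180mW
delta = phase_error_for_null(null_depth)          # radians away from pi
path_error_nm = delta / (2 * math.pi) * 4000.0    # at a 4µm wavelength

print(f"Null depth: {null_depth:.1e}")
print(f"Tolerable phase error: {delta * 1e3:.2f} mrad")
print(f"Tolerable path error at 4µm: {path_error_nm:.2f} nm")
```

Under these idealised assumptions, holding a one-in-a-million null means keeping the two optical paths matched to about a nanometre, which hints at why such an experiment needs a carefully controlled setup.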

This project is known as ‘Nulling Interferometry Cryogenic Experiment’, or ‘NICE.’ When added to the mother project at ETH regarding exoplanets, ‘The Large Interferometer for Exoplanets’ (LIFE), you get a nice acronym of their project: NICE LIFE.

Quantum cascade lasers are an advanced technology, a product of the conquest of space and precise at the atomic scale: compact, lightweight and robust solid-state laser sources emitting in the mid-infrared.

The QCL active region is based on a carefully quantum-engineered heterostructure, made of hundreds of nanometre-scale semiconductor layers, which creates a specific electronic density of states that leads to optical gain and lasing between cascaded electron sub-bands. The peculiarity of this concept is that the emitted wavelength only depends on the layer thicknesses, not on the constituent materials. Consequently, the same technology can produce lasers in a very broad spectral range (λ from 3µm to 20µm).

The design of the QCL structures – active region, waveguide and distributed feedback – is fully mastered at mirSense with proprietary modelling tools, ensuring high agility and the capability to respond to custom demands. mirSense is the only QCL manufacturer to exploit two different semiconductor technologies: the standard InP-based technology and the alternative InAs-based technology. This allows an unprecedented extension of the accessible wavelength coverage of QCLs, down to 3µm on one side and up to 20µm on the other.

Photonic Solutions is proud to be mirSense’s UK and Ireland partner, providing a full range of quantum cascade lasers. mirSense is committed to developing and manufacturing cutting-edge laser technologies. This, combined with our mission of supplying best-in-class products backed by the best technical support, means we can provide you with the best service and QCL products available.
