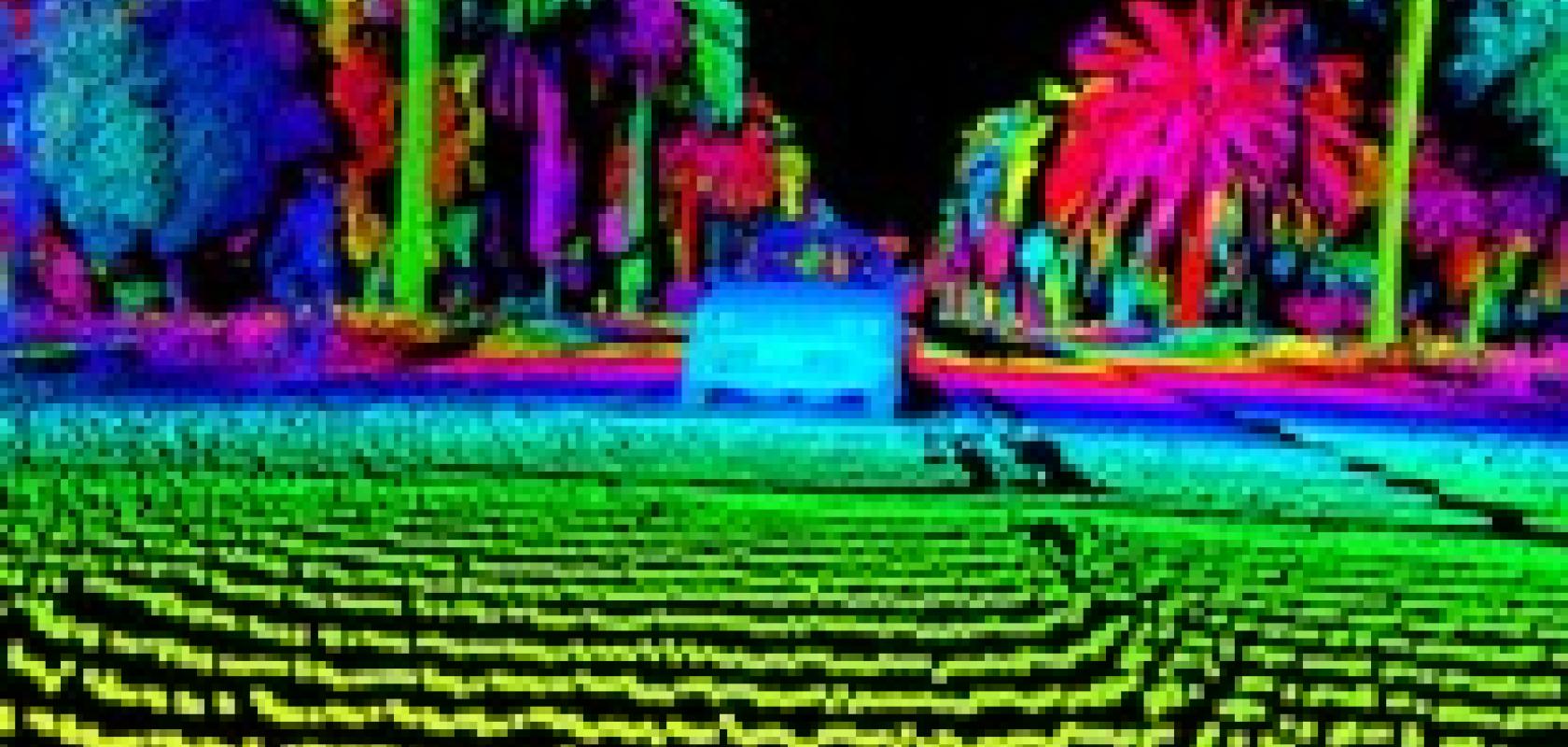

Various lidar companies are finding ways to meet the huge challenges automotive applications present. Jessica Rowbury discovers Luminar’s approach, and the decisions made in designing its system.

As one of the most important technologies for enabling self-driving vehicles, lidar has gained considerable attention in recent years.

In the last month alone, Israeli lidar developer Innoviz Technologies announced a partnership with HiRain Technologies, a Tier 1 automotive supplier in China; and Volvo Cars revealed it had acquired a stake in start-up Luminar. The global market for lidar in automotive applications is expected to reach $5 billion by 2023, according to a market report from Yole Développement.

Despite the heavy investment, most experts agree we are still years away from seeing fully autonomous cars on the roads. In order to reach level four or five autonomy, where there is minimal or no intervention from the driver, there are enormous technology and market requirements that lidar developers must overcome.

Various companies have launched their own lidar variations, each with differences in design factors, such as laser wavelength, detector architecture and measurement technique. One of them, Luminar – Volvo’s new partner – has developed a system that shows promise, not least of all in terms of range, cost and scalability. Jason Eichenholz, the firm’s co-founder and CTO, explained the reasoning behind the design of the company’s system during a talk at the Photonics West trade show, which took place earlier in the year in San Francisco.

Slow progress

During his presentation, Eichenholz argued that up until recently, there has been little progress with lidar technology. He referred to a competition in 2007, where several self-driving cars completed a 90km race course with no major accidents, a feat that would surely be commended by the lidar community even today. A 64-line lidar system from Velodyne was mounted on five of the six vehicles that finished the course. The challenge was held by the Defense Advanced Research Projects Agency (DARPA) of the US Department of Defense to see if autonomous vehicles could manoeuvre through urban settings with unpredictable traffic.

However, because of the challenges of translating this innovative technology into a marketable product that’s scalable, progress has been limited, Eichenholz noted. ‘If you look at the performance of lidar – and that 64-line system from 2007 specifically – the technology took a step back over the next decade,’ he said. ‘From 2007 to 2017, in order to get the price, size and power down, the customer had to endure an equally proportionate drop in vertical resolution to number of lines. You’ve seen some of the three-line systems in cars today, you see 16-line systems, and you see a high-end 32-line system. So over this period of time, you had to significantly reduce your ability to hit the price point and get the ball moving with supply.’

The DARPA Urban Challenge in 2007 saw cars with no driver manoeuvre round a 90-kilometre course. Credit: DARPA

Technical requirements

Reducing cost and size while still meeting such stringent automotive requirements is a challenging task.

In order for cars to ‘see’ with good performance, Eichenholz explained, a lidar system would need to identify objects from 200 metres away at 10 per cent reflectivity. ‘Because unless the government requires all of us to wear white clothing, and all cars to be white, you need to be able to see a tyre on a roadway, with sufficient spatial resolution, and you’re going to need to see it from 200m away,’ Eichenholz said.

Moreover, the system needs to be able to operate at a minimum of 10 frames per second, and deliver more than one million points per second. It should be class one eye-safe, and have the algorithms and scanning architecture to deal with rain and snow. The unit also needs to be capable of operating for at least 10,000 hours. ‘The utilisation rates of these cars are going to go from three to five per cent to maybe 90 per cent – so the cars are operating for longer periods of time,’ Eichenholz said.

But perhaps even more critical is the need to limit interference, Eichenholz noted. The sensors need to be able to operate in bright daylight, but also in environments where there are other self-driving vehicles, which are all firing laser beams into their surroundings – possibly into the detectors of other cars.

‘I can’t stress the importance of interference enough,’ Eichenholz commented. ‘It’s not just the interference coming from you, it’s the car in front of you, shooting lidar directly at you; it’s the car from behind you, shooting over you, hitting the road sign ahead, which is reflecting and lighting up like a Christmas tree. Limited interference is critical,’ he said.

Along with the technical challenges, lidar developers have to be aware of the demands from the automotive industry, such as scalable architectures, a design that will fit onto varying vehicle types, and production scalability. ‘The latter means taking a lot of knowhow from the telecoms industry and how to put photonics components together,’ Eichenholz remarked.

Decisions and final architecture

The debate about the best wavelength to use in an automotive system – 905nm or 1,550nm – is a common one within the lidar space. Companies such as Velodyne and Innoviz use 905nm, which has the benefit of needing cheaper lasers and sensors. Other companies are opting for the eye-safe wavelength of 1,550nm, which allows more power to be output and reduces the number of components required.

Luminar chose the latter. ‘We looked at how many 905nm photons were required to effectively illuminate a 10 per cent reflectivity target at 200 to 250 metres,’ Eichenholz said. ‘We did the math of how many photons were required, the aperture size, the scan speed, analysed how we were scanning, and most importantly the point density. We quickly learned that we need to move to 1,550nm,’ Eichenholz said, adding that anywhere in the NIR eye-safe region (1,400-1,700nm) would have been acceptable, but 1,550nm makes the most sense because of the existing lasers and telecoms infrastructure.

‘[With 1,550nm] we now have a much larger – an order of magnitude larger – photon budget to use to design our system,’ he said.
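The kind of photon arithmetic Eichenholz describes can be roughed out in a few lines. The sketch below is not Luminar’s calculation: it assumes a textbook model in which the target is a diffuse (Lambertian) surface that fills the beam at normal incidence, and it ignores atmospheric and optical losses. The pulse energy and aperture diameter are hypothetical values chosen purely for illustration.

```python
import math

H = 6.62607015e-34  # Planck constant, J*s
C = 299_792_458.0   # speed of light, m/s


def photons_per_pulse(pulse_energy_j, wavelength_m):
    """Number of photons in a pulse of the given energy and wavelength."""
    return pulse_energy_j * wavelength_m / (H * C)


def collected_fraction(reflectivity, range_m, aperture_diameter_m):
    """Fraction of emitted light that returns into the receive aperture.

    Assumes a Lambertian target filling the beam at normal incidence,
    with no atmospheric or optical losses (a simplification).
    """
    aperture_area = math.pi * (aperture_diameter_m / 2) ** 2
    return reflectivity * aperture_area / (math.pi * range_m ** 2)


def returned_photons(pulse_energy_j, wavelength_m, reflectivity,
                     range_m, aperture_diameter_m):
    """Photons per pulse arriving back at the detector under the model above."""
    emitted = photons_per_pulse(pulse_energy_j, wavelength_m)
    return emitted * collected_fraction(reflectivity, range_m,
                                        aperture_diameter_m)


# Hypothetical numbers: 1 microjoule pulse, 25 mm aperture,
# 10 per cent reflectivity target at 200 m.
base = returned_photons(1e-6, 1550e-9, 0.10, 200.0, 0.025)
# The same system with ten times the pulse energy.
boosted = returned_photons(10e-6, 1550e-9, 0.10, 200.0, 0.025)
print(f"returned photons per pulse: {base:.0f} vs {boosted:.0f}")
```

Under this model the return falls with the square of the range and scales linearly with pulse energy, so a tenfold larger photon budget translates directly into a tenfold stronger return from the same dark target.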

After deciding on 1,550nm, Luminar looked at the detector. The firm decided on InGaAs after tests revealed that it offers ten times better sensitivity performance than materials like silicon or germanium, when using a 1,550nm wavelength.

However, the challenge was to integrate an InGaAs detector into a system in a cost-effective way. ‘InGaAs is expensive, consisting of lots and lots of linear arrays, which have really poor performance,’ said Eichenholz. ‘They needed to be CCD cooled in order to get the noise required. Even worse, the yields were bad, so you could have three or four dead pixels in just a 256 linear array. That’s not good if you’re trying to design a scanning system. In a 10-megapixel camera on a phone, if 50 are dead – no problem, you can stoop. You can’t do that in lidar.’

For this reason, for the detector architecture, Luminar chose to use an avalanche photodiode (APD) in a linear mode operation. This also improves the performance when in bright conditions or when there are other lidar-mounted vehicles on the road. ‘We have all the photons at once, which makes it much less susceptible to interference and sunlight going for linear APD,’ Eichenholz said.

On deciding the ranging method, Luminar looked at various approaches such as frequency-modulated continuous-wave (FMCW) and time of flight (ToF).

‘The number one limitation in any lidar system when you’re trying to do millions of points per second is that the speed of light is just too slow. We have to wait 1.3 microseconds for that light pulse to come back from the 200 metres, which really limits the ability to get higher spatial resolution,’ noted Eichenholz.
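The 1.3 microsecond figure follows directly from the round trip the pulse has to make. As a minimal sketch, assuming a single channel that waits for each return before firing the next pulse (an illustrative simplification, not a description of any particular product):

```python
C = 299_792_458.0  # speed of light, m/s


def round_trip_time_s(range_m):
    """Time for a pulse to reach a target at range_m and return."""
    return 2.0 * range_m / C


def max_unambiguous_pulse_rate_hz(max_range_m):
    """Highest pulse rate if each return must arrive before the next shot."""
    return 1.0 / round_trip_time_s(max_range_m)


wait = round_trip_time_s(200.0)
rate = max_unambiguous_pulse_rate_hz(200.0)
print(f"round trip at 200 m: {wait * 1e6:.2f} microseconds")  # about 1.33
print(f"max pulses per second per channel: {rate:,.0f}")      # about 749,000
```

On these assumptions one channel tops out at roughly three quarters of a million points per second at 200 metres, which illustrates why the speed of light becomes the bottleneck when the target is millions of points per second.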

Most firms – including Luminar and Velodyne – use time of flight, where ultrafast pulses of light are sent out and the time to return is measured. However, the FMCW method is gaining traction. In March, Blackmore Sensors and Analytics announced it had raised $18 million via series B funding from BMW, BMW i Ventures and Toyota AI Ventures for its cost-effective FMCW lidar, attributes it says are critical differentiators in the field.

Unlike time of flight, FMCW uses a constant power laser beam and modulation of the laser frequency to perform simultaneous range and velocity measurements similar to modern radar systems. According to Blackmore, FMCW lidar is more sensitive and much less prone to interference.
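How a frequency sweep yields range and velocity at once can be sketched with the textbook triangular-chirp model used for FMCW radar. This is not Blackmore’s implementation: the function names, the sign convention (positive velocity means the target is approaching) and all parameter values are assumptions made for illustration.

```python
C = 299_792_458.0  # speed of light, m/s


def beat_frequencies(range_m, velocity_mps, bandwidth_hz, chirp_s, wavelength_m):
    """Forward model: beat frequencies on the up and down chirps.

    The range term comes from the sweep delay, the Doppler term from
    target motion (positive velocity = approaching target).
    """
    f_range = 2.0 * range_m * bandwidth_hz / (C * chirp_s)
    f_doppler = 2.0 * velocity_mps / wavelength_m
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_s, wavelength_m):
    """Invert the model: recover range and velocity from the two beats."""
    f_range = (f_up_hz + f_down_hz) / 2.0
    f_doppler = (f_down_hz - f_up_hz) / 2.0
    range_m = C * chirp_s * f_range / (2.0 * bandwidth_hz)
    velocity_mps = wavelength_m * f_doppler / 2.0
    return range_m, velocity_mps


# Hypothetical sweep: 1 GHz over 10 microseconds at 1,550nm,
# with a target 100 m away closing at 20 m/s.
f_up, f_down = beat_frequencies(100.0, 20.0, 1e9, 10e-6, 1550e-9)
print(range_and_velocity(f_up, f_down, 1e9, 10e-6, 1550e-9))
```

The sum of the two beat frequencies carries the range and their difference carries the velocity, which is why a single measurement can deliver both.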

However, time of flight is more established and, according to Eichenholz, the scanning method Luminar uses provides a way to cut interference and cost.

Because of the 1,550nm wavelength, where the lasers are more expensive, Luminar’s lidar has just two modules, each containing a single laser, detector and a mirror that sweeps the beam over the surrounding area many times a second. As a comparison, Velodyne’s VLS-128 system has 128 lasers operating at the cheaper 905nm wavelength, with 128 photodetectors, to capture the scene.

‘We see the world through a soda straw, and we scan back and forth,’ explained Eichenholz. ‘Only when I’m shooting at [another car’s lidar] and it’s shooting at me and we overlap do I have an interference problem, and we know there is an interference problem – we have ways to detect this – and we can signal that this is a bad data,’ he said. ‘So scanning allows a way to solve cost and interference.’

The scanning approach also allows the user and car to obtain additional spatial depth content where they need it. ‘With 64 lines over 30 degrees – if you look at the amount of lines you’re getting at 200m, it’s not that many. But if we focus the resolution, it offers higher spatial content which allows safer driving,’ said Eichenholz.
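Eichenholz’s point about 64 lines over 30 degrees can be checked with simple trigonometry. The sketch below assumes evenly spaced scan lines and a 1.7 m tall target; the narrower 5 degree band is a hypothetical illustration of concentrating the same number of lines, not a specification of any real system.

```python
import math


def line_spacing_m(fov_deg, n_lines, range_m):
    """Vertical gap between adjacent scan lines at a given range.

    Assumes the lines are spread evenly across the field of view.
    """
    step_deg = fov_deg / n_lines
    return range_m * math.tan(math.radians(step_deg))


def lines_on_target(target_height_m, fov_deg, n_lines, range_m):
    """Approximate number of scan lines that land on a target of this height."""
    return target_height_m / line_spacing_m(fov_deg, n_lines, range_m)


uniform = line_spacing_m(30.0, 64, 200.0)  # about 1.6 m between lines
focused = line_spacing_m(5.0, 64, 200.0)   # about 0.27 m between lines
print(f"uniform 30 degrees: {uniform:.2f} m apart, "
      f"{lines_on_target(1.7, 30.0, 64, 200.0):.1f} lines on a 1.7 m target")
print(f"focused 5 degrees:  {focused:.2f} m apart, "
      f"{lines_on_target(1.7, 5.0, 64, 200.0):.1f} lines on a 1.7 m target")
```

With the lines spread evenly, a person-sized object at 200 metres is crossed by roughly one line; concentrating the same lines in a narrow band puts several on it.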

With hundreds of millions already invested, lidar is proving to be one of the most critical elements for making self-driving vehicles a reality. And it certainly doesn’t seem like there will be just one type of system – with numerous firms taking different approaches, it will be interesting to see how the various lidar systems will fit into the cars of the future.
