Lidar could become an important part of autonomous driving and potentially a huge market opportunity for laser and detector manufacturers, as Greg Blackman discovers at the Image Sensors conference in London

The Audi A8 is marketed as an SAE level three autonomous vehicle, one of the first models to reach this stage of autonomy. Level three is classified by the Society of Automotive Engineers as ‘eyes off’, meaning the driver can turn their attention away from the road but must be prepared to intervene when necessary. Level five is a fully autonomous vehicle.

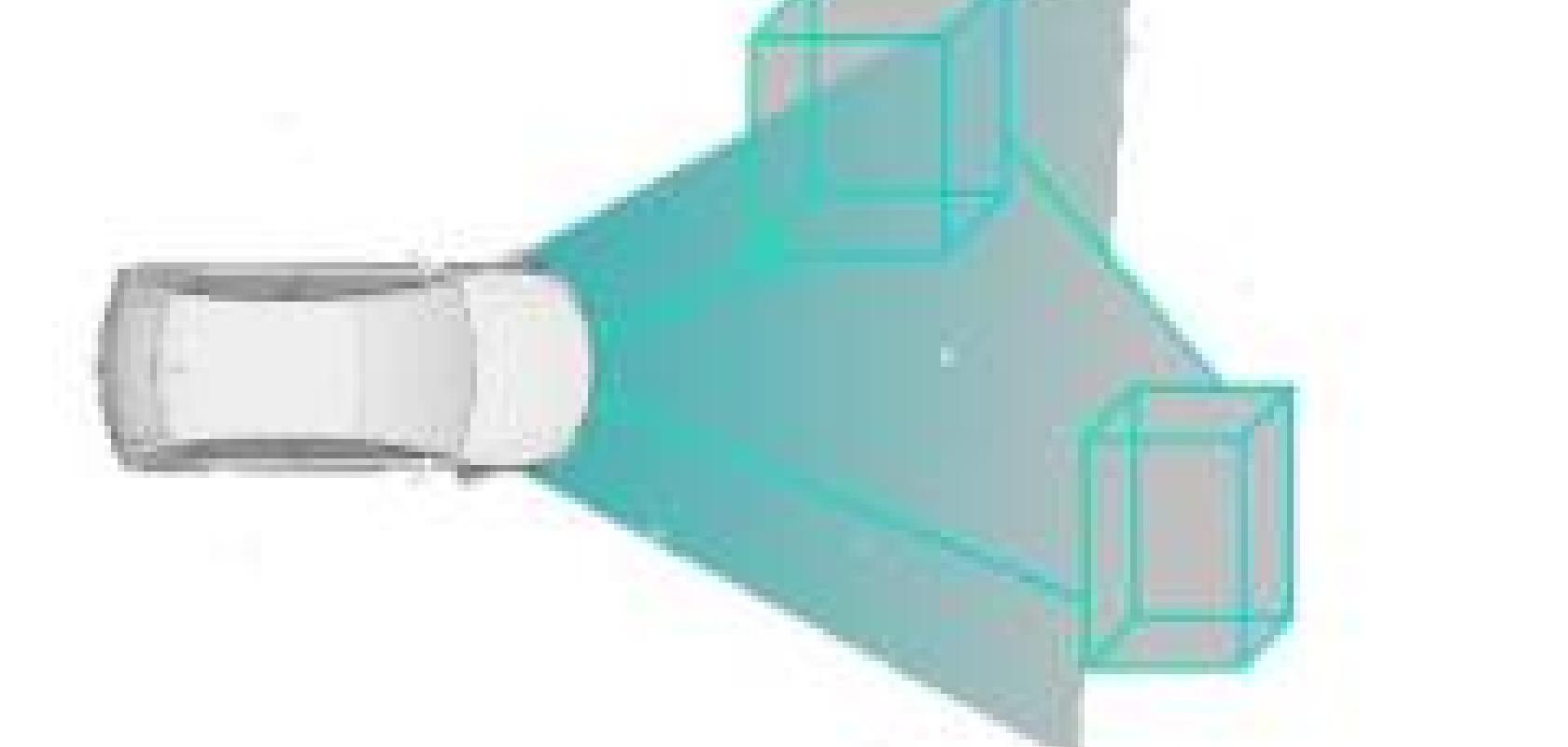

The Audi A8’s traffic jam pilot is able to take control on highways and multi-lane roads in slow-moving traffic at speeds of up to 60km/h. The car has 12 ultrasonic sensors, four 360-degree cameras, a front camera and a driver observation camera, five radar sensors, and a laser scanner.

Laser scanners might be part of the sensing suite of experimental autonomous vehicles like Google’s self-driving car, but there are very few automotive-grade lidar products for mass deployment. A report from Goldman Sachs Global Investment Research predicts that the market opportunity for lidar in automotive will grow from zero in 2015 to $10 billion by 2025, and $35 billion by 2030. Wade Appelman, VP sales and marketing at semiconductor firm SensL, noted that it’s rare to find a market that goes from $0 to $35 billion in 15 years. ‘Even if they’re [Goldman Sachs] off by an order of magnitude it’s still a large compound annual growth,’ he said, speaking at the Image Sensors conference in London on 14 March.
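To put the forecast in perspective, the implied growth rate can be worked out with a few lines of Python. This is only a sketch: a growth rate from a zero base is undefined, so it uses the 2025 and 2030 figures quoted above, and the `cagr` helper is an illustrative name rather than anything from the Goldman Sachs report.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Forecast figures quoted in the article: $10bn in 2025, $35bn in 2030.
forecast = cagr(10e9, 35e9, 5)

# Hypothetical case: both figures are too high by a factor of ten.
scaled_down = cagr(1e9, 3.5e9, 5)

print(f"Forecast CAGR 2025-2030: {forecast:.1%}")
print(f"CAGR if both figures are 10x too high: {scaled_down:.1%}")
```

Under these assumptions the rate comes out at roughly 28 per cent a year, and it does not change if both figures are scaled down by the same factor, because only the ratio between them matters.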

SensL is developing single-photon avalanche diodes (SPADs) and silicon photomultiplier (SiPM) detectors, which it says will enable lidar systems to operate at a range of 200 metres or more.

Oren Rosenzweig, co-founder of Israeli lidar system maker Innoviz Technologies, said at Image Sensors that the cost of lidar is prohibitive, and the performance is not good enough. Lidar needs to be able to detect objects 200 metres away, while also sensing small obstacles in the road at an angular resolution of around 0.1 x 0.1 degrees.
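What a 0.1-degree resolution means at 200 metres can be estimated from geometry alone. The sketch below assumes an idealised scanner in which adjacent samples are separated by exactly the angular resolution and ignores beam divergence; the function name is illustrative.

```python
import math

def sample_spacing_m(range_m: float, angular_res_deg: float) -> float:
    """Lateral distance between adjacent lidar samples at a given range."""
    half_angle_rad = math.radians(angular_res_deg) / 2.0
    return 2.0 * range_m * math.tan(half_angle_rad)

# Figures from the article: 200 m range, 0.1 degree angular resolution.
spacing = sample_spacing_m(200.0, 0.1)
print(f"Sample spacing at 200 m: {spacing:.3f} m")
```

On these assumptions, neighbouring samples land about 35cm apart at 200 metres, which gives a sense of why finer resolution matters for picking out small obstacles at long range.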

Founded in 2016, Innoviz has raised $82 million from investors, including Delphi and other Tier 1 suppliers. The firm has recently launched its off-the-shelf Innoviz Pro lidar system, which it is selling for R&D use and for retrofitting fleets of vehicles. It plans to release the Innoviz One automotive-grade lidar device for integration into vehicles at the beginning of next year, which it will sell to OEMs through its Tier 1 partnerships.

Rosenzweig, speaking to Electro Optics at the show, said that $1,000 per lidar system might be acceptable for certain early adopters of the technology, but that a price of hundreds of dollars per lidar was needed to make it attractive to automotive OEMs. As volumes increase, however, costs will go down.

In his presentation, Rosenzweig said that no vehicles at the moment had reached SAE level three automation, including the Audi A8 which he still classed as a level-two vehicle, but that ‘we see real commitment from premium OEMs for the 2020/2021 timeframe for level three’.

Both Rosenzweig and Appelman agreed that lidar wasn’t the only answer for autonomous driving, and that a fusion of lidar, cameras, and radar is needed to reach level three-to-five autonomy.

Innoviz’s technology is a solid-state lidar built around a MEMS scanner, which is based on a micro-mirror designed by the company; the signal is processed in a proprietary ASIC. The Innoviz One has a 250-metre detection range, an angular resolution of 0.1 x 0.1 degrees, a frame rate of 25fps, and a depth accuracy of 3cm. The device is based on 905nm laser light; 1,550nm would cost too much for the lasers and detectors, Rosenzweig said.

Solid-state lidar uses primarily 905nm lasers, according to Carl Jackson, founder and CTO of SensL, although he added that 940nm VCSEL arrays are also being developed. Jackson said that SiPMs or a SiPM array can improve sensitivity and ranging compared to avalanche photodiodes (APDs).

Direct time of flight (ToF) is the most applicable method for long-range lidar, Jackson noted. Signal-to-noise ratio and range can be improved by sending out multiple laser pulses, with each pulse correlated in time. At 200 metres the systems have to detect almost single photons returning, which is why multi-shot lidar imaging is preferable at this range.
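The principle behind direct ToF ranging can be sketched in a few lines of Python. The round-trip calculation is basic physics; the square-root gain from averaging several pulses is a textbook assumption for uncorrelated noise, not a figure given by Jackson, and the function names are illustrative.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def round_trip_time_s(distance_m: float) -> float:
    """Time for a laser pulse to reach a target and return."""
    return 2.0 * distance_m / C

def distance_from_tof_m(tof_s: float) -> float:
    """Target distance recovered from a measured round-trip time."""
    return C * tof_s / 2.0

def multishot_snr_gain(n_pulses: int) -> float:
    """Idealised SNR gain from averaging n pulses with uncorrelated noise."""
    return math.sqrt(n_pulses)

tof = round_trip_time_s(200.0)
print(f"Round trip for 200 m: {tof * 1e6:.3f} microseconds")
print(f"Recovered distance: {distance_from_tof_m(tof):.1f} m")
print(f"Idealised SNR gain from 16 pulses: {multishot_snr_gain(16):.1f}x")
```

A target at 200 metres returns the pulse after about 1.33 microseconds, which also limits how quickly successive pulses can be fired in one direction without their echoes overlapping.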

SensL’s first product for lidar is a 400 x 100 ToF SPAD array with high dynamic range SPAD pixels, optimised for vertical line scanning. It will be sampling in the second half of 2018. Jackson said that the sensor array can be used to create a lidar solution with 0.1 degree x-y resolution, suitable for greater than 100-metre ranging at 10 per cent reflectivity in full sunlight. SensL’s R-series of SiPMs can detect 10 per cent of the photons returning at 905nm – ‘that’s market leading’, Appelman stated. Jackson also noted that VGA-quality SPAD arrays could be available next year.

Eye-safety is all about the design of the system, according to Jackson. He said that a laser pulse of 1ns from a 5mm aperture at 30 degrees angle of view in the y direction can reach 26,908W of power and still be eye safe, ‘which is plenty of power to do long-range lidar with 905nm’. He added that a 120 x 30-degree system will need 6,000W of laser power to achieve 200-metre ranging with SiPM technology.
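Peak powers in the kilowatt range sound large, but for a 1ns pulse the energy involved is small. The sketch below converts the quoted peak powers to pulse energy, assuming an idealised rectangular pulse; it also assumes that the 6,000W figure refers to the same 1ns pulse width and comparable aperture conditions, which Jackson's remarks as reported here do not confirm.

```python
def pulse_energy_j(peak_power_w: float, pulse_width_s: float) -> float:
    """Energy of an idealised rectangular pulse: peak power times duration."""
    return peak_power_w * pulse_width_s

PULSE_WIDTH_S = 1e-9  # 1 ns pulse, as quoted in the article

# Quoted eye-safe peak power, and quoted power needed for 200 m ranging.
eye_safe_energy = pulse_energy_j(26_908.0, PULSE_WIDTH_S)
required_energy = pulse_energy_j(6_000.0, PULSE_WIDTH_S)  # assumes the same 1 ns pulse

fraction = required_energy / eye_safe_energy

print(f"Eye-safe pulse energy: {eye_safe_energy * 1e6:.1f} microjoules")
print(f"Required pulse energy: {required_energy * 1e6:.1f} microjoules")
print(f"Fraction of eye-safe figure: {fraction:.0%}")
```

On these assumptions the eye-safe figure corresponds to about 27 microjoules per pulse, and the 6,000W requirement to about 6 microjoules, or roughly a fifth of it.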

There are also challenges regarding the lifetime of components for automotive-grade lidar systems – automotive OEMs require 10-15 years. Rosenzweig noted that Innoviz’s micro-mirror operates outside the resonance frequency of vibration from the car to avoid product failure.

Lidar is just one part of the solution to reach level three-to-five driving autonomy; there are also regulatory requirements and cost requirements that need to be met, along with technical maturity of the systems. The computing power needed in these cars is extremely high, which is itself prohibitively costly. How long it will take for artificial intelligence to reach the level of accuracy needed for full autonomy is still an open question.